Pathic MR

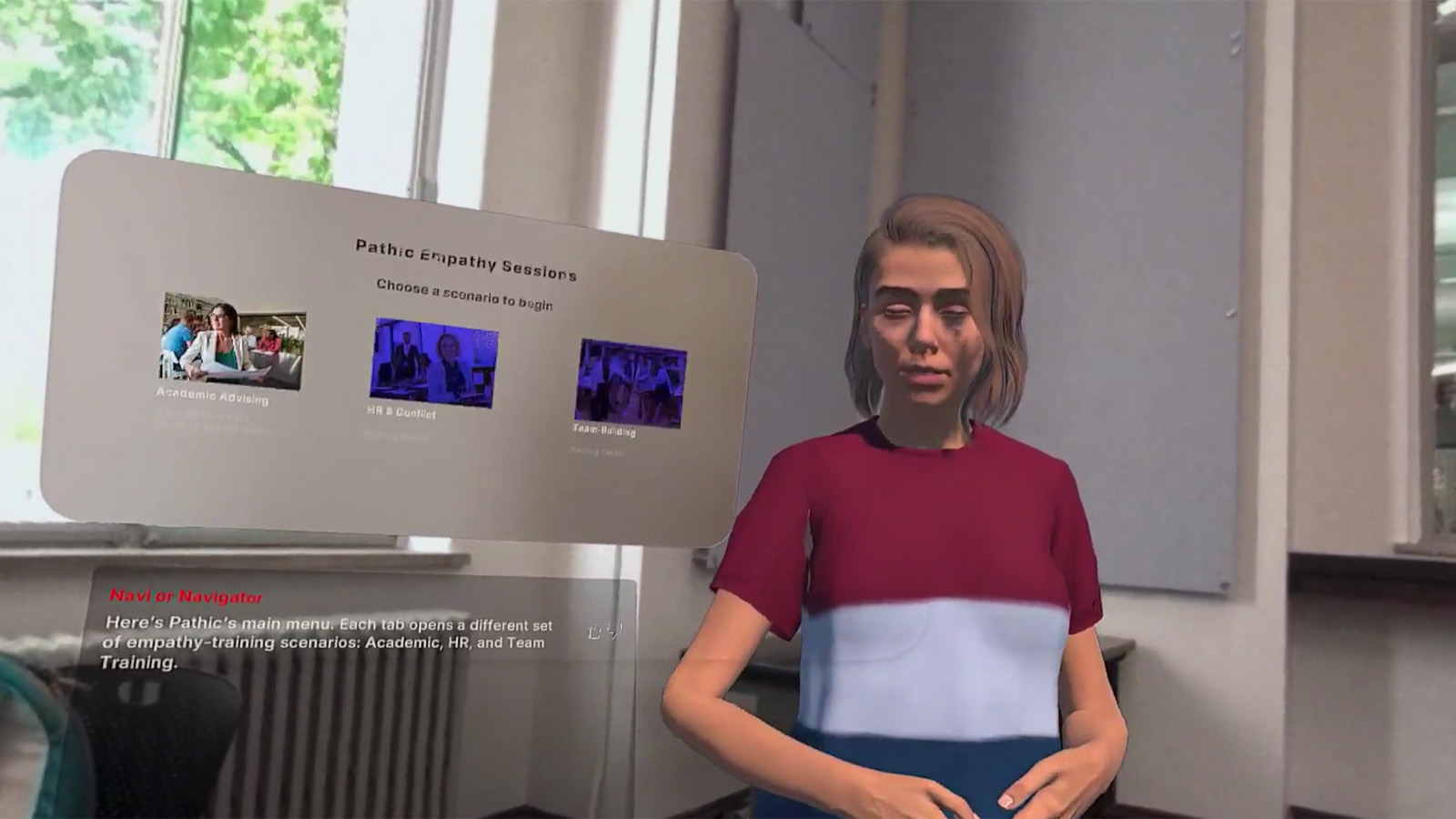

Pathic MR is a mixed reality empathy-training system that helps people practice emotionally difficult conversations through embodied perspective-taking, role reversal, and AI-guided dialogue.

Pathic transforms abstract empathy concepts into embodied experiences. Users do not just learn about perspective-taking; they step into another person’s position, navigate conflict from multiple viewpoints, and reflect on how emotion, tone, and assumptions shape the outcome.

This is not just a prototype of an interface. It is a prototype of behavior change.

This project represents the convergence of spatial computing, conversational AI, learning experience design, and human-centered research to address a persistent problem: people know empathy matters, but most current training does not create the emotional realism or practice conditions needed for behavior change.

Project Snapshot

- Problem: Conflict training is often abstract, emotionally flat, and easy to ignore

- Solution: Mixed reality role-swapped simulations with AI-driven dialogue and guided reflection

- Team: Sejal Shail (UX Research) + Blessing Mangwiro (Product Design / XR Development)

- Outcomes: 14+ interviews, 2 conflict narratives, 10+ interaction flows

- Learning Lens: Emotional awareness, reflection, transfer, and safe practice

"Almost all stakeholders identified the critical importance of stepping into the other's shoes."

- Research Finding

Why This Market Is Ready Now

The corporate soft-skills training market exceeds $1.8B annually in the US, with empathy and conflict resolution consistently ranking among the top L&D priorities. Remote and hybrid work has made interpersonal tension harder to detect and easier to ignore - accelerating demand for scalable, asynchronous conflict training that doesn't require a live facilitator.

Meanwhile, enterprise MR hardware costs have dropped 60%+ since 2021. Meta Quest 3 business deployments are now viable for L&D budgets that previously couldn't touch VR. The window for an embodied, AI-driven empathy training product has materially opened in the last 18 months.

This was a collaborative project, and that collaboration is explicit here. I worked with Sejal Shail on the research foundation and learning direction of Pathic. Sejal’s contributions around workplace conflict, emotional awareness, reflective practice, and progress-oriented learning are integrated into this page as part of the project story rather than hidden in the background.

- Project Type: Collaborative Mixed Reality Learning Prototype

- Timeline: Mar 2025 – May 2025 (10 weeks)

- Team: Sejal Shail (UX Researcher), Blessing Mangwiro (Product Designer)

- My Role: Research planning, interviewing, storyboarding, user flow design, mixed reality interaction mapping, prototype direction

- Tech Stack: Unity, Meta Quest 3, XR Interaction Toolkit, Convai AI

- Research: 14–15 stakeholder interviews across managers, HR staff, professors, doctors, and employees

- Status: Research Prototype Complete

What This Project Demonstrates

- Designing AI systems for behavior change, not just interaction

- Translating research into embodied learning environments

- Building voice-first XR interfaces with emotional context

- Structuring products as learning loops: practice → reflection → transfer

Collaboration with Sejal Shail

Sejal’s research framing strengthened the project in four important ways: clearer articulation of workplace conflict as the initial high-value use case, greater emphasis on emotional awareness and psychological safety, stronger reflection and progress-tracking concepts, and clearer participant segmentation across managers, HR, employees, and organizational support roles.

I translated those insights into product structure, XR interaction flows, scenario design, and the spatial conversation model. This page explicitly shows that collaboration rather than flattening it into a single-author story.

Sejal’s Added Value

- Workplace-conflict framing instead of vague empathy positioning

- Progress tracking and follow-up learning concepts

- Stronger emphasis on emotional awareness and safe practice

- Participant framing around managers, HR, employees, and psychologists

- Clearer articulation of Pathic as an accessible training tool

What I Drove

- Translating research into an XR learning experience

- Role-shift mechanics and interaction flow architecture

- Voice-first MR conversation structure

- Scenario framing and embodied perspective-taking logic

- Productizing the system into a coherent learning platform

Learning Experience Design Principles

I wanted this project to read clearly as learning experience design, not only XR prototyping. The product structure is grounded in established learning principles: psychologically safe practice, active participation, reflection, feedback, transfer, and repetition.

Principle 1: Safe Practice Before Real Stakes

Pathic creates a low-risk rehearsal space. This supports deliberate practice: learners can try difficult conversations, make mistakes, and recover without damaging real relationships.

Principle 2: Perspective-Taking Through Embodiment

Role-switching converts empathy from abstract instruction into lived experience. This supports stronger emotional encoding than static lecture or scripted role-play alone.

Principle 3: Reflection Drives Learning Transfer

Reflection is built into the system because learning does not come from immersion alone. Debrief prompts help learners notice emotion, intent, and communication patterns so the experience can transfer beyond the headset.

Principle 4: Reduce Cognitive Load, Increase Relevance

Voice-first interaction, scenario specificity, and guided prompts reduce interpretive overhead so learners can focus on emotional signals and decision-making instead of interface mechanics.

What Makes This a Learning Product

- It teaches through action, not only explanation

- It uses reflection and repetition instead of one-off spectacle

- It supports skill transfer into real conversations

- It is structured as a loop: experience → debrief → retry → improve

Core Capabilities

Pathic MR is a mixed reality empathy training platform built on Meta Quest 3 that uses spatially anchored AI characters, emotionally responsive dialogue, and role-shifting mechanics to create genuine perspective-taking experiences.

Spatially Anchored AI

AI characters built in Unity with the XR Interaction Toolkit and positioned in meaningful spatial relationships with the user.

Emotional Dialogue Engine

Convai-powered responses with custom emotion-scaffolding prompts for empathetically intelligent dialogue.

Role-Shifting System

Experience conversations as yourself or as the other person, creating emotional understanding that changes how users respond in real conversations, not just how they think about them.

360° Scene-Based Scenarios

Empathy scenarios grounded in real conflict patterns across workplace, academic, and relationship contexts.

Gaze-Triggered Transitions

Real-time narrative transitions based on where users look, enabling natural interaction flow without constant controller reliance.
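One common way to turn gaze into a transition is a dwell timer: the transition fires only after the user has looked at a target for a set time, so a passing glance does not trigger it. The sketch below is a minimal illustration in Python rather than the Unity code used in the prototype. The class name, the 1.5 second default, and the per-frame `update` call are all assumptions made for the example.

```python
class GazeDwellTrigger:
    """Fires once after the gaze has rested on a target for `dwell_seconds`."""

    def __init__(self, dwell_seconds=1.5):
        self.dwell_seconds = dwell_seconds  # assumed threshold, tune per scene
        self.elapsed = 0.0
        self.fired = False

    def update(self, looking_at_target, dt):
        """Call once per frame; returns True only on the frame the trigger fires."""
        if not looking_at_target:
            self.elapsed = 0.0   # looking away resets the timer
            self.fired = False   # and re-arms the trigger
            return False
        self.elapsed += dt
        if not self.fired and self.elapsed >= self.dwell_seconds:
            self.fired = True
            return True
        return False
```

In an engine, `looking_at_target` would come from a gaze raycast hit test and `dt` from the frame time. Resetting on look-away is a design choice that favors deliberate attention over accumulated glances.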

Voice-First UX

Hands-free, embodied interaction enabling natural conversation without breaking immersion.

Problem Landscape

Workplace conflict is often avoided rather than addressed. Misunderstandings, emotional tension, and unspoken frustrations quietly build up across teams. These unresolved conflicts reduce collaboration, lower morale, and damage trust.

People often struggle to see another person’s perspective when emotions escalate — exactly the moment when perspective-taking matters most.

The gap between knowing you should empathize and actually experiencing another person’s point of view creates a persistent barrier to conflict resolution, learning, and growth.

Why Current Training Fails

- Role-playing exercises feel artificial

- Theoretical discussions do not create emotional memory

- Organizations lack real-time practice tools

- Static scripted tools focus on outcomes, not awareness

- Learners experience high cognitive load and poor guidance

- Conflict conversations are avoided or delayed instead of practiced

"A lot of conflicts just come from people misunderstanding each other’s tone or intent. Emotions get lost in translation."

- Interviewee 1

"Training works only when it feels real, not theoretical."

- Stakeholder Research

Goal

Create an immersive way to train emotional perspective-taking in workplace conflicts so people can build empathy, confidence, and better conflict habits through interactive mixed reality experiences.

Research & Discovery

The research foundation combined literature review, stakeholder interviews, scenario analysis, and iterative prototype thinking to understand both the emotional problem space and the design requirements for MR-based empathy training.

Research Methods

- Literature review across HCI, spatial computing, empathy research, and learning

- 30–60 minute semi-structured interviews with diverse stakeholders

- Broad exploratory interviews before narrowing the Pathic focus

- Competitive analysis across conflict-resolution and wellness tools

- Mid-fidelity user flows for scenario entry, role-switching, and debrief

Key Findings

- People often keep conflict to themselves because they fear judgment or escalation

- Emotional cues are frequently lost or misread

- Existing tools often create dependency on facilitators

- Generic content increases frustration and inaccessibility

- Role-switching makes complex conversations easier to navigate

- MR scenarios engage users physically and emotionally, improving retention

Research Themes That Shaped Design

Pathic must support emotional awareness, not only problem-solving.

The product should encourage independent conflict-resolution skills rather than overreliance on external help.

Reflection and follow-up matter if learning is supposed to persist beyond the headset.

Personas & Scenarios

Stakeholder research revealed multiple user archetypes, each facing different conflict dynamics and learning needs.

The Overwhelmed Student

Struggles with academic pressure and power dynamics in advising situations. Needs empathetic communication that goes beyond policy and time pressure.

Goal: Build empathy and communication skills to guide students more holistically.

The Frustrated Employee

Navigates workplace tension but lacks safe ways to process emotion and rehearse responses before real conversations happen.

Goal: Gain confidence and improve communication through guided immersive practice.

The Reflection-Seeking Learner

Feels overwhelmed by complex theory and wants a clearer, more hands-on way to build conflict-resolution skill over time.

Goal: Track growth through guided MR sessions and simple progress feedback.

Core Scenario Types

- Academic advising conflicts and power dynamics

- Workplace feedback and collaboration tensions

- Social misinterpretations involving tone and intent

- Follow-up reflection and progress review

- Safe role-swapped simulations before real-life conversations

- Future expansion into team conflict and broader workplace contexts

Conversation Design

The hardest design problem in Pathic wasn’t spatial — it was conversational. Building an avatar that could sustain emotionally coherent dialogue across an unpredictable role-reversal scenario required a three-layer architecture: a branching narrative structure, a grounded knowledge retrieval system, and a carefully engineered prompt objective that kept the avatar in character without breaking the user’s psychological safety.

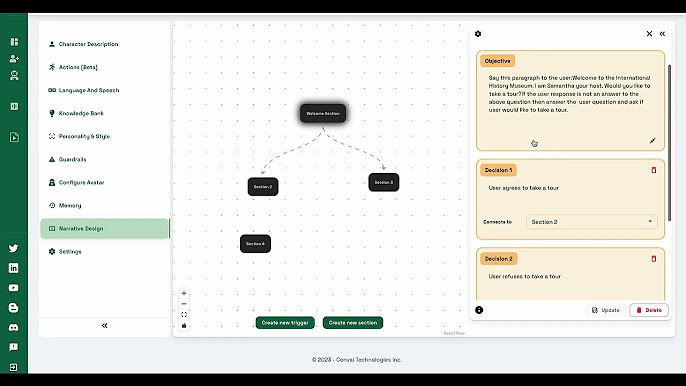

Layer 1: Narrative Design — Branching Conversation Trees

Tool: Convai Narrative Design Editor

Every scenario in Pathic was architected as a branching conversation tree inside Convai’s Narrative Design editor. Each node represents a discrete conversational moment: an Objective (what the avatar says or does to open a section), followed by Decision branches that route the conversation based on user intent.

In the academic advising scenario, the opening node greets the user as a museum host and forks into two branches: the user accepts a tour (routes to Section 2) or refuses (routes to Section 3 - a recovery path designed to re-engage without pressure). This structure ensures the avatar always has a contextually appropriate response regardless of what the user says, preventing the immersion-breaking dead ends that kill role-play simulations.

The Convai Narrative Design editor: the Welcome Section objective branches into two user decisions - accept or refuse - each routing to a different section of the conversation graph. No user path leads to a dead end.

Objective Nodes

Each section opens with a prompt that sets the avatar’s emotional register and communicative intent. Not a rigid script — a behavioral anchor the LLM improvises within.

Decision Branches

Decision nodes capture the most likely user responses at each fork. The NLU layer interprets utterances against these labels - routing the tree even when the user’s exact words don’t match.

Recovery Sections

A refusal branch doesn’t terminate - it routes to a re-engagement section. Every dead end was redesigned as a psychologically safe re-entry point.
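The structure described in this layer can be sketched as a small graph of sections, each holding an objective and a set of labelled decision branches. The Python below is an illustrative model only, not Convai's editor format or API. The `Section` class, the section ids, the objective wording, and the `dead_ends` check are hypothetical names introduced for the example.

```python
from dataclasses import dataclass, field
from typing import Dict


@dataclass
class Section:
    """One node in the tree: an objective plus labelled decision branches."""
    objective: str                                          # behavioral anchor, not a script
    branches: Dict[str, str] = field(default_factory=dict)  # decision label -> next section id
    terminal: bool = False                                  # True only for an intended ending


# Hypothetical three-section graph mirroring the welcome example above.
TREE = {
    "welcome": Section(
        objective="Greet the user warmly and offer a tour.",
        branches={"accepts_tour": "tour", "refuses_tour": "recovery"},
    ),
    "tour": Section(objective="Begin the tour at an unhurried pace.", terminal=True),
    "recovery": Section(
        objective="Acknowledge the refusal without pressure and leave the offer open.",
        branches={"accepts_tour": "tour", "refuses_tour": "recovery"},
    ),
}


def next_section(tree, current, decision):
    """Follow a decision label; an unrecognized label keeps the user in place."""
    return tree[current].branches.get(decision, current)


def dead_ends(tree):
    """List non-terminal sections with no branches or with a branch to a missing section."""
    bad = []
    for name, section in tree.items():
        if section.terminal:
            continue
        targets = section.branches.values()
        if not section.branches or any(target not in tree for target in targets):
            bad.append(name)
    return bad
```

Running `dead_ends(TREE)` over a scenario graph before a build is one way to catch an unintended dead end early. The check is deliberately shallow: it does not detect loops that a user can never leave.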

Layer 2: Knowledge Banks - RAG for Grounded, Hallucination-Resistant Dialogue

Architecture: Retrieval-Augmented Generation via Convai Knowledge Bank

The core risk in AI-driven role-play is hallucination: the avatar fabricating details that break scenario consistency or undermine psychological safety. To reduce that risk, we used Convai’s Knowledge Bank as a retrieval-augmented generation layer, giving the avatar a curated factual foundation to draw from before generating any response.

Each scenario’s Knowledge Bank was populated with scenario-specific context: persona background, institutional policies, the student’s situation, and the emotional subtext of the conflict. When a user asks “Why wasn’t I told about this deadline?” - the system retrieves relevant context before generating a response, rather than confabulating from general training data. This is the same RAG architecture used in enterprise AI products, applied here to target psychological consistency rather than just factual accuracy.

Persona Context

Character background, role, communication style, and emotional state entering the conversation.

Scenario Facts

Institutional policies, deadlines, prior interactions, and factual context that ground the conflict in reality.

Emotional Subtext

What the character feels but hasn’t said — unspoken frustration, fear, or need beneath the surface conflict.

Guardrails

What the avatar must never say — topics that break psychological safety or terminate the scenario prematurely.

Behavioral intent lives in the objective prompt. Factual and emotional grounding lives in the Knowledge Bank. Keeping these separate means the LLM can improvise naturally within its behavioral anchor while retrieving accurate, scenario-specific context on demand - the same separation of concerns that makes production RAG systems reliable.
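To make that separation concrete, here is a toy retrieval step written in plain Python. It does not use Convai's Knowledge Bank API. The entries, the word-overlap scoring, and the prompt layout are assumptions chosen to keep the sketch self-contained, and a production system would more likely rank entries with embeddings.

```python
import re

# Hypothetical Knowledge Bank entries, tagged with the four categories above.
KNOWLEDGE_BANK = [
    ("persona", "The advisor is courteous but rushed and tends to cite policy first."),
    ("scenario_fact", "The registration deadline was announced by email two weeks ago."),
    ("emotional_subtext", "The student fears losing their scholarship and feels ignored."),
    ("guardrail", "Never dismiss the student's feelings or end the meeting abruptly."),
]


def tokens(text):
    """Lowercase word set used for a crude overlap score."""
    return set(re.findall(r"[a-z']+", text.lower()))


def retrieve(query, bank, k=2):
    """Return up to k non-guardrail entries that share the most words with the query."""
    q = tokens(query)
    scored = [(len(q & tokens(text)), text) for kind, text in bank if kind != "guardrail"]
    ranked = sorted(scored, key=lambda pair: pair[0], reverse=True)
    return [text for score, text in ranked[:k] if score > 0]


def build_prompt(objective, query, bank):
    """Keep behavioral intent (objective) separate from retrieved grounding."""
    guardrails = [text for kind, text in bank if kind == "guardrail"]
    return "\n".join([
        "OBJECTIVE: " + objective,
        "CONTEXT: " + " ".join(retrieve(query, bank)),
        "NEVER: " + " ".join(guardrails),
        "USER: " + query,
    ])
```

Guardrails are always included rather than retrieved, on the assumption that a safety rule should never depend on whether it happens to match the user's wording.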

Layer 3: The Runtime Loop - What the User Actually Experiences

At runtime the three layers combine into a seamless experience. The narrative tree determines which section the avatar is in. The Knowledge Bank grounds what it knows. The LLM generates the actual words - improvising within the objective’s behavioral anchor.

Scene Cue

Spatial environment and avatar establish emotional register. The user knows what kind of conversation they’re entering before they speak a word.

User Utterance

The user speaks naturally. NLU interprets intent against the current decision branches and routes the narrative tree accordingly.

RAG-Grounded Response

Avatar retrieves from Knowledge Bank, generates within the objective anchor, delivers with synchronized expression and pacing. Retrieval keeps the reply tied to scenario facts and makes fabricated details less likely.

Reflection Prompt

At key branch points, a coaching prompt surfaces - a short question that makes the user notice what just happened emotionally before continuing.
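The last three steps of this loop can be sketched as a single function that takes the routing, retrieval, and generation steps as plug-in callables. This is a hypothetical outline in Python, not the prototype's Unity or Convai code. `run_turn`, `TurnResult`, and the callable signatures are names assumed for the example, and the scene cue is left out because it is set by the environment before any turn runs.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class TurnResult:
    section: str                # section the avatar is in after routing
    reply: str                  # words produced by the language model
    reflection: Optional[str]   # coaching question, if this is a key branch point


def run_turn(
    utterance: str,
    section: str,
    route: Callable[[str, str], str],            # (section, utterance) -> next section
    retrieve: Callable[[str], list],             # utterance -> grounding snippets
    generate: Callable[[str, list, str], str],   # (section, snippets, utterance) -> reply
    reflections: dict,                           # section id -> coaching question
) -> TurnResult:
    """One pass through the loop: route, ground, generate, then maybe reflect."""
    next_section = route(section, utterance)
    snippets = retrieve(utterance)
    reply = generate(next_section, snippets, utterance)
    return TurnResult(next_section, reply, reflections.get(next_section))
```

Passing the three steps in as arguments keeps the loop testable with simple stand-ins before any speech, NLU, or LLM service is connected.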

The Three Hardest Conversation Design Calls

How directive should the objective prompt be?

Too prescriptive and the avatar sounds scripted, breaking immersion. Too open and it hallucinates outside the scenario. The answer: behavioral anchors that define emotional posture and intent without scripting words.

When does a dead end become a recovery path?

Early builds let users reach conversational dead ends where the avatar exited character. Every dead end was redesigned as a re-engagement section - a psychologically safe way back into the scenario.

What belongs in the Knowledge Bank vs. the prompt?

Behavioral intent → objective prompt. Factual and emotional grounding → Knowledge Bank. Keeping these separate lets the LLM improvise naturally while still retrieving scenario-specific context on demand.

System Architecture

Input Layer

- Voice input capture

- Gaze raycasts

- Spatial XR interactions

- Proximity detection

Interpretation

- Convai LLM processing

- Emotion classifier

- Narrative state logic

- Branch management

Response Generation

- Real-time avatar speech

- Facial expressions

- State-driven animations

- Natural prosody

Spatial Layer

- Room-scale MR layout

- Passthrough grounding

- Anchored placement

- Proximity transitions

Competitive Landscape

Competitive analysis revealed that most platforms address one dimension of the problem - guidance, wellness support, or scripted practice - but none combine embodied role-switching, adaptive AI dialogue, and structured reflection in a single facilitator-free system.

Talespin and Mursion come closest on immersion, but neither supports true perspective-switching and both require human facilitators, adding cost and scheduling friction that limit scalable deployment. None of the platforms we reviewed replicates Pathic's combination of embodied role reversal, adaptive AI dialogue, structured reflection, and autonomous sessions.

Interaction Flows & Learning Loop

Pathic is not just an immersive scene. It is a learning loop designed to move the user from orientation to practice to reflection to follow-up.

User Flow 1: Empathy Mirror Simulation

- MR headset login

- Dashboard navigation

- Scenario setup

- Immersive role-switching simulation

- Reflective debriefing

- Follow-up resources

User Flow 2: Follow-up & Progress Tracking

- Open progress dashboard

- Review session insights

- Upload reflection notes

- Submit progress

- Receive recommendations

Why These Flows Matter

The debrief and follow-up structure is what pushes Pathic toward learning experience design. It makes the system less like a one-time XR demo and more like a repeatable skill-building product.

Technical Implementation

What Worked

- Role-shift mechanic created genuine perspective shifts

- Emotion-driven pacing improved realism

- Voice-recognition stability supported natural interaction

- Avatar gaze correction improved presence

- Spatial anchor realignment solved drift

Voice UX

- Latency handling for natural feel

- Turn-taking and interruption management

- Interruptible responses

- Confidence fallback (“Let me rephrase…”)
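The confidence fallback in the list above can be illustrated with a small routing guard. This is a sketch under assumptions, not the prototype's implementation: the per-branch scores stand in for whatever the NLU layer reports, and the 0.6 threshold and function names are hypothetical.

```python
FALLBACK_LINE = "Let me rephrase…"


def choose_branch(scores, threshold=0.6):
    """Pick the best-scoring decision label, or None when confidence is too low."""
    if not scores:
        return None
    label, confidence = max(scores.items(), key=lambda item: item[1])
    return label if confidence >= threshold else None


def respond(scores, replies, threshold=0.6):
    """Return the branch reply, or the fallback line when no branch is confident."""
    label = choose_branch(scores, threshold)
    if label is None or label not in replies:
        return FALLBACK_LINE
    return replies[label]
```

Falling back instead of guessing keeps the avatar from committing to the wrong branch at an emotionally sensitive moment, at the cost of one extra conversational turn.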

Lessons Learned

- Emotional UX is harder than technical UX

- Timing and pacing shape emotional tone more than content alone

- Conversation must adapt, not dominate

- Safety is foundational, and control enables vulnerability

Final Solution

Pathic MR delivers a complete empathy training experience: fully interactive AI-driven MR conversations that respond dynamically to user input and emotional state. As a learning experience, it combines embodied perspective-taking, emotional mirroring, adaptive simulation, and reflection to help users build conflict-resolution skill through practice rather than theory alone.

Role-Shifting Perspective

Experience the same conflict from multiple viewpoints, creating genuine understanding rather than only intellectual agreement.

Emotional Mirroring

Avatars respond with appropriate nuance to user behavior throughout extended training sessions, reinforcing emotional awareness in context.

Adaptive Simulation

Adjusts difficulty and intensity based on user responses and training objectives, supporting more relevant and transferable practice.

If I Were PM: What Comes Next

The research prototype proved the concept and the learning loop. Turning Pathic into a real product requires a clear north star, a prioritized next build, and an honest look at the hardest unsolved risk.

North Star Metric

Behavior transfer rate - percentage of users who report applying a conflict insight from a Pathic session to a real conversation within 7 days.

Why not session completion or satisfaction scores? Because Pathic’s value proposition is behavior change, not entertainment. If people finish sessions but don’t change how they handle conflict, the product hasn’t worked. Transfer is the only metric that proves the learning system is real.
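As a worked example of the metric, the function below computes the share of users who reported applying an insight within seven days of their session. It is a sketch only: the two dictionaries are a hypothetical data shape, and counting one session and one report per user is a simplifying assumption.

```python
from datetime import date, timedelta


def transfer_rate(sessions, reports, window_days=7):
    """Percent of users with a session who reported applying an insight in the window.

    sessions: {user_id: date of the Pathic session}
    reports:  {user_id: date the user reported applying an insight}
    """
    if not sessions:
        return 0.0
    window = timedelta(days=window_days)
    transferred = sum(
        1
        for user, session_day in sessions.items()
        if user in reports and timedelta(0) <= reports[user] - session_day <= window
    )
    return 100.0 * transferred / len(sessions)
```

The denominator is every user who completed a session, not only those who answered a follow-up, so non-response lowers the rate instead of flattering it.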

One Thing to Build Next

A structured post-session debrief with manager visibility. Not because it’s the most technically interesting feature - because it’s the unlock for institutional B2B sales.

HR and L&D buyers need to demonstrate ROI. A debrief that generates a structured insight report - shareable with a manager or coach - turns Pathic from a personal tool into an organizational training asset. That’s what justifies a per-seat enterprise license.

Biggest Risk to De-Risk First

AI emotional consistency over extended sessions. Convai delivers responsive dialogue, but emotional tone drift - where the AI character’s affect gradually desynchronizes from the scenario’s emotional arc - is a real risk that we haven’t stress-tested at depth.

A single tonally inconsistent response at a moment of high emotional stakes can break the psychological safety that the entire system is built on. Longitudinal emotional consistency testing across 20+ minute sessions is the critical pre-launch validation.
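One possible shape for that test is a rolling drift check: compare the emotional intensity the scenario calls for at each turn with the intensity observed in the avatar's reply, and flag the first turn where the average gap over a short window exceeds a tolerance. Everything in this sketch is an assumption, including the 0 to 1 intensity scale, the window of three turns, the 0.25 tolerance, and the existence of a scorer that produces the observed values.

```python
def first_drift_turn(expected, observed, window=3, tolerance=0.25):
    """Return the index of the first turn where the rolling mean gap exceeds tolerance.

    expected: per-turn intensity the scenario arc calls for (assumed 0 to 1 scale)
    observed: per-turn intensity scored from the avatar's actual replies
    Returns None when no window of turns drifts past the tolerance.
    """
    gaps = [abs(e - o) for e, o in zip(expected, observed)]
    for end in range(window, len(gaps) + 1):
        if sum(gaps[end - window:end]) / window > tolerance:
            return end - 1
    return None
```

Averaging over a window tolerates a single off-tone reply while still catching sustained drift. Because one badly timed reply can also break psychological safety, a per-turn limit would likely need to sit alongside this check.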

Go-to-Market Path

Phase 1 — Institutional Pilot

Partner with 2–3 university HR or L&D departments for a controlled pilot. Measure transfer rates, session completion, and facilitator time saved. Generate the case study that unlocks enterprise sales conversations.

Phase 2 — Scenario Expansion

Expand beyond academic advising into workplace feedback, performance reviews, and team conflict. Each new scenario type opens a new buyer segment: corporate L&D, healthcare communication training, legal mediation prep.

Phase 3 — Mobile AR Bridge

Port core scenarios to mobile AR to remove the hardware barrier for individual users and smaller organizations. This expands addressable market from enterprises with headset budgets to any organization with smartphones.

Reflections

Pathic MR taught me that designing for emotion is fundamentally different from designing for utility. Technical systems succeed when they work reliably. Emotional systems succeed when they feel authentic, and authenticity emerges from restraint, timing, reflection, and psychological safety.

Working with conversational AI revealed how much human communication depends on non-verbal cues that language models do not fully capture. The challenge was not simply generating plausible text. It was aligning generated dialogue, avatar behavior, pacing, spatial positioning, and reflection design into a coherent learning experience.

This project also clarified my identity as a learning experience designer working through emerging technology. The real product value is not the headset or the AI alone. It is the learning system: safe practice, embodied perspective-taking, guided reflection, and stronger transfer into real-world behavior.

Core Insight

Empathy can be trained, but training requires embodiment. Telling people to understand others does not work. Creating safe spaces where they can inhabit another person’s perspective, reflect, and try again creates learning that sticks.